Code is not programming

It was never about the code

2026-03-03 9d192af

Programming is older than code, but we keep talking as if they are the same thing. That confusion is now making itself apparent in the discourse around "AI" in software, among both the zealots and the naysayers.

Whether you like it or not, LLMs are writing more code every day. While some claim that this is the end of software engineers, others are asking: what does that mean for people who still want to write code by hand? The people who enjoy it, the people who find coding fun.

It is a fair question. But it contains an assumption that most people never examine: that their job was coding in the first place. To conflate coding with programming is like a woodworker mistaking skill with a chisel for the craft of building a dining table that sits flat and lasts for decades. Tools matter, and using them when you have mastered them is genuinely fun, but tool mastery is not the craft itself. Programming is the act of decomposing a problem precisely enough that an unthinking executor can carry out the solution. Code is just the current notation for expressing that thinking.

Leslie Lamport, the Turing Award winner behind LaTeX and TLA+, put it best:

"People confuse programming with coding. Coding is to programming what typing is to writing."

You can be a programmer without being a coder (though these days that would be super weird), and you can be a coder without being a programmer (this usually goes with having a YouTube channel).

That sounds kind of insane, right? Programming is not coding? Well, let's take a look at the timeline.

The history of programming

The year is 1804. Joseph-Marie Jacquard builds a loom controlled by punch cards. There is no computer, no syntax, no compiler. There is, however, a problem definition:

Weave a specific pattern using a mechanism precise enough to carry it out given the right instructions.

Someone has to think through those instructions. That person is programming, in 1804! Or should I say, 145 BCE (Before Code Era).

In 1843, Ada Lovelace writes an algorithm for computing Bernoulli numbers on Charles Babbage's Analytical Engine, a machine that will never be built, proving that you don't even need Haskell to be a theoretical programmer.

In 1946 the ENIAC becomes operational. You program it by physically rewiring cables and setting switches on patch panels. There is no code. There is logic, expressed in copper and toggles.

Then come punch cards. Then assembly language in 1949, the first recognisable code, 145 years into the history of programming. Next comes Fortran in 1957, which the machine code priesthood resists fiercely. Then more high-level languages, then IDEs. At every step the notation changed. At every step, the thinking and the goal stayed the same.

Of the two centuries of programming history, code covers barely the last third. The constant was never syntax. It was always the discipline of making thought precise enough to execute.

Why this distinction matters

The ability to write prose does not make you a novelist, and in the same way, the ability to write code does not make you a programmer. That applies regardless of whether you are a human or an LLM.

This is what makes the current "AI" debate so frustrating to watch. One camp says LLMs can write code, therefore software engineering is solved. The other says the code an LLM produces is too buggy to trust. Both sides have accepted the same flawed premise: that programming is the production of code. Neither has stopped to notice that what they are arguing about is typing.

What LLMs actually are

LLMs right now are coders. Very fast, very prolific coders. They can produce syntactically correct, idiomatic code in dozens of languages on demand. That is genuinely useful, in the same way that a printing press is genuinely useful to a novelist. But nobody would call a printing press an author.

If that still sounds philosophical, the time-allocation data points the same way. IDC research found that developers spend only 16% of their time writing code. That does not mean code is unimportant. It means most engineering effort lives elsewhere: requirements, design, debugging, testing, communication, and understanding. That is the programming.

What changes

Fred Brooks called this distinction essential vs. accidental complexity back in 1986: the hard part of building software is the specification, design, and testing of the conceptual construct, not the labour of representing it. Code was accidental complexity. LLMs are removing it.

What remains is the essential complexity that was always underneath: problem modelling, domain understanding, algorithmic thinking, knowing when a solution is correct and when it just looks correct. For most of programming's history, the coding was hard enough to obscure this. Now the coding is cheap, and you can see who was actually programming and who was just typing.
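To make "looks correct" concrete, here is a minimal Python sketch. The leap-year task and both function names are illustrative assumptions of mine, not something taken from a real codebase. Both functions are tidy and idiomatic; only one matches the Gregorian rule, and telling them apart takes knowledge of the domain, not of the syntax.

```python
def is_leap_year_plausible(year: int) -> bool:
    """Looks correct: agrees with the real rule for 2020, 2023 and 2024."""
    return year % 4 == 0


def is_leap_year(year: int) -> bool:
    """Gregorian rule: every 4th year, except centuries not divisible by 400."""
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)


# The two versions agree on the years most people would think to try...
everyday_years = [2020, 2023, 2024]
agree = all(is_leap_year_plausible(y) == is_leap_year(y) for y in everyday_years)

# ...and disagree on a century year: 1900 was not a leap year.
disagree_1900 = is_leap_year_plausible(1900) != is_leap_year(1900)
```

Either version could have been typed by a person or generated by an LLM. Knowing which one to ship is the part that stays.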

The people who understood what they were doing are the ones benefiting most from the shift. They know when an LLM fits the problem and when it doesn't, because choosing the right tool is part of the programming. A carpenter does not use a hammer to drive in a screw.

Even if LLMs eventually reach human-level reasoning, that does not make programming a single-player game. One of the largest collaborative software projects in history, the Linux kernel, is built by thousands of contributors with different mental models, different domain expertise, and different ways of seeing the same problem. That is not a limitation to be engineered away. Research on collective intelligence consistently shows that a mix of diverse thinkers outperforms any single thinker, no matter how capable. The same logic extends to human and machine thinking: a programmer who can combine their own judgment with an LLM's pattern-matching is likely to outperform either working alone. The coordination, the negotiation of tradeoffs, the integration of perspectives: that is programming too, and no amount of faster code generation makes it go away.

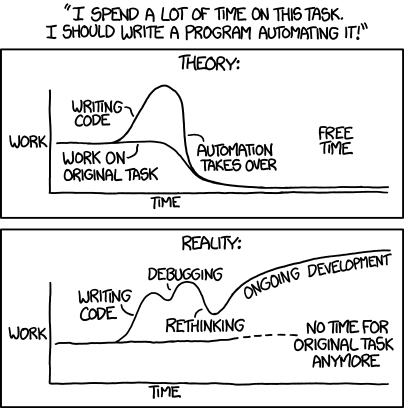

For a long time, automating one-off tasks often took longer than just doing them once. That old tradeoff is real, and xkcd captured it perfectly.

I have personally found that LLM-generated code has made me re-evaluate that. And building these quick one-off tools brings that same joy of coding, especially when you can use an LLM to get unstuck.

Good woodworkers do not just use tools well. They also build their own: a jig for one awkward cut, a guide for one exact joint, a rack of specialised chisels in different sizes for different jobs. Language models lower the barrier to doing that in software: building custom one-off tools, trying them, testing them, and keeping the ones that earn a place in your kit.

Don't be a coder, be a programmer

Programming survived punch cards. It survived assembly. It survived every language, framework, and paradigm that came and went over the past two centuries. It survived because it was never about the use of a particular tool. It was about the thinking.

Code is just the latest notation, and even if we get to a world where it is no longer the default way we express programs, that should not worry you if you are a programmer. You have a skill that has outlived every tool ever built to express it.

So whether you are starting out, or you are a coder who now realises you need to become a programmer: don't just learn to code. Learn to program. Learn to decompose problems, model domains, map functionality back to real user needs, and understand the machine you are programming for. The how has changed a dozen times already. The what never has.